Tangelic Talks – Season 04 | Episode 06

AI, Energy & the Future of Sustainability: A Realistic Look w/ Chris Carter

Artificial intelligence is reshaping the world faster than most people can process — from how we communicate, work, and learn, to how we build climate solutions. But with its explosive growth comes a looming question:

Can AI truly support a sustainable future, or will it create new risks we aren’t prepared to manage?

In this episode of Tangelic Talks, hosts Victoria Cornelio and Andres Tamez sit down with Chris Carter, Chairman and CEO of Approyo, a global technology services company operating deep inside the world of enterprise systems, data centers, and emerging technologies. With more than 40 years in the tech space, Chris brings a rare blend of optimism, caution, and practical insight — cutting through hype to reveal what AI can (and cannot) solve for climate and sustainability.

This blog breaks down the most important insights from the conversation, offering a grounded, human-centered perspective on the rapidly changing world of AI.

AI, Data Centers & the Cost of the Digital World

Chris begins by grounding the conversation in a truth most people overlook:

AI lives inside data centers — and data centers are massive consumers of energy, water, and infrastructure.

From cooling systems to server loads to battery storage, today’s AI operates inside an ecosystem that requires:

- Enormous amounts of electricity

- Constant water supply

- Land and materials

- A 24/7 backbone of monitoring and maintenance

Chris explains that the average person has no idea how resource-intensive data centers truly are — not because they’re hidden intentionally, but because:

- People don’t ask,

- Tech companies don’t explain, and

- The topic sounds too technical to be accessible.

This lack of awareness creates unnecessary fear, misinformation, and polarized responses — from “AI will save the world” to “AI will destroy everything.”

His take?

AI’s environmental impact is real — but understanding it is the first step toward regulating it responsibly.

Why We Fear What We Don’t Understand

When Victoria and Andres ask why society isn’t informed about AI’s environmental footprint, Chris is candid:

- Some people intentionally exaggerate fears to push an agenda

- Others hype up AI without acknowledging its costs

- Many simply build fast and communicate poorly

The result is a public discourse full of:

- Panic about job loss

- Misunderstanding about energy use

- Suspicion about automation

- Wild narratives about killer robots

Chris doesn’t dismiss these fears. In fact, he shares openly:

“I’m still scared of AI. Truly. We haven’t even stepped into the dugout to put our uniform on.”

His analogy is powerful:

We’re not even at “batting practice” yet with AI development. The tools are early. The risks are early. The mistakes are early. And yet — the hype is massive.

Which brings us to one of the episode’s biggest concerns.

The AI Arms Race: Innovation Without Guardrails

AI’s growth is being driven not by responsible planning, but by competition:

- Countries competing with each other

- Companies competing for market share

- Investors competing for returns

- CEOs racing to appear “cutting-edge”

This arms race has led to:

- Massive energy consumption

- Unregulated data scraping

- Poorly implemented AI systems in critical sectors

- Tech-driven layoffs simply to boost stock values

- Infrastructure demands that existing grids can’t support

And, as Chris notes, AI tools are being deployed where they don’t even make sense.

From power grids to corporate finance, companies are using AI simply because they feel pressured to — not because it’s the right tool.

This trend, he warns, is dangerous.

Why Most AI Projects Fail (and Waste Resources)

Chris cites a widely discussed MIT report showing:

93% of enterprise AI projects fail.

Mostly because:

- Companies don’t know what problem AI should solve

- Leaders misunderstand what AI can do

- Teams deploy tools without validation

- AI is used where existing systems already perform better

The result?

Huge financial waste, unnecessary energy consumption, and unrealistic expectations.

His advice is straightforward:

- AI should be validated, not blindly adopted

- AI must be paired with human oversight

- AI should solve actual problems, not imaginary ones

If an AI project fails any one of these tests (measurable, repeatable, tested, necessary), it shouldn’t be implemented.
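As a rough illustration, the four tests can be written as a simple go/no-go check. This is a minimal sketch, not something Chris presented in the episode: the `AIProposal` record, the function names, and the one-line reading of each criterion in the comments are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class AIProposal:
    """Hypothetical record describing a proposed AI project."""
    name: str
    measurable: bool  # assumed meaning: success is defined by a metric
    repeatable: bool  # assumed meaning: same inputs give consistent results
    tested: bool      # assumed meaning: outputs were validated by people
    necessary: bool   # assumed meaning: existing systems do not already do it better


def failed_criteria(p: AIProposal) -> list[str]:
    """Return the names of the criteria the proposal does not meet."""
    checks = {
        "measurable": p.measurable,
        "repeatable": p.repeatable,
        "tested": p.tested,
        "necessary": p.necessary,
    }
    return [name for name, ok in checks.items() if not ok]


def should_implement(p: AIProposal) -> bool:
    """A proposal passes only if it meets all four criteria."""
    return not failed_criteria(p)


# Hypothetical example: a tool that was never validated and is not needed.
chatbot = AIProposal("invoice chatbot", True, True, False, False)
print(should_implement(chatbot))  # False
print(failed_criteria(chatbot))   # ['tested', 'necessary']
```

The point of listing the failed criteria, rather than returning only yes or no, is that a team can see what still has to be proven before the project moves forward.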

China vs the U.S.: Two Different AI Futures

One of the most fascinating insights Chris shares is the contrast between the U.S. and China:

The U.S. Model:

- Bleeding-edge innovation

- Extreme hardware requirements

- High energy consumption

- Constant need for massive datasets

The China Model:

- Smaller, more efficient datasets

- Lower compute requirements

- Faster iteration

- More sustainable scaling

China isn’t trying to build the biggest model — just an efficient one.

And that, Chris suggests, could reshape global power dynamics in AI development.

E-Waste, Energy & the Hidden Costs of AI

Andres brings up one of the biggest sustainability concerns:

AI accelerates e-waste and electricity demand at a dangerous scale.

Chris agrees.

He explains that companies are:

- Constantly upgrading hardware

- Building new data centers

- Increasing their carbon footprint

- Pushing renewable energy systems to their limits

Even with natural gas, nuclear, and solar improving, the demand outpaces the supply.

AI is not “carbon neutral” by default.

Not even close.

The Human Problem Behind the Tech Problem

Perhaps the most important insight from the episode is this:

“AI isn’t the problem. People are the problem.”

Chris and Andres unpack how:

- Tech CEOs push tools before they’re ready

- Companies use AI to justify layoffs

- Investors pressure unrealistic innovation

- Regulators lack any meaningful understanding

- Startups treat AI like a pump-and-dump bubble

This is why ethical concerns — from surveillance to misinformation to data scraping — remain unresolved.

The tool is powerful.

But the people directing it often lack ethics, knowledge, or long-term thinking.

Where Humans Should Never Be Removed

When asked where humans should always remain in the loop, Chris gives a grounded answer:

- Anywhere empathy is needed

- Anywhere real human decision-making is essential

- Anywhere social interaction defines community

He warns that tools like humanoid robots and VR metaverses risk replacing human connection, not enhancing it.

Chris is firm:

AI should never replace human relationships, caregiving, or community life.

The Data Dilemma: Reliability, Ownership & Exploitation

Victoria raises one of the most pressing issues in AI ethics:

How do we know AI data is reliable — and more importantly — ethical?

Chris explains:

- Most people unknowingly feed their data to companies

- AI models scrape the internet, including copyrighted content

- Private data becomes public domain through terms of service

- Competitors mix data unintentionally

- Companies use datasets they shouldn’t have access to

The fundamental problem?

AI is being trained on a polluted internet — including misinformation, bias, copyrighted work, and AI-generated content.

This threatens the long-term reliability of AI systems.

Regulation: The Missing Piece

Everyone in the conversation agrees:

Regulations on data, training methods, and end-user agreements must dramatically improve.

Currently:

- EULAs are exploitative

- Data scraping is unregulated

- Governments are behind

- Congress lacks technical literacy

- Companies self-regulate (which means they don’t)

Chris is blunt:

“Some of the people making laws about tech have no idea what they’re voting on.”

Without policy intervention, AI will continue expanding in unsafe, unsustainable ways.

So… What Should Organizations Do?

For overwhelmed companies trying to use AI responsibly, Chris recommends:

- Start with real problems — not hype

- Validate all outputs

- Use AI to enhance humans, not replace them

- Invest in tools built for enterprise (Snowflake, SAP, Perplexity)

- Train your team before deploying anything

- Look at sustainability impacts before scaling

- Avoid uploading private data blindly

His message is simple:

Good AI is thoughtful AI.

Bad AI is rushed AI.

AI & Climate Work: What Will the Next 10 Years Look Like?

Despite the risks, Chris remains optimistic.

He believes AI will:

- Improve environmental data modeling

- Optimize logistics and supply chains

- Strengthen grid forecasting

- Accelerate clean energy transitions

- Support climate research

- Enhance sustainability reporting

- Streamline carbon accounting

- Enable early detection of climate risks

Not because AI is magic — but because humans will learn to use it well.

His hope for the future is simple:

“We all live on one planet. If we use these tools with good intentions, we can make it better for everyone.”

Final Thoughts

This episode is a crucial reminder that AI is neither savior nor villain.

It is a tool — powerful, imperfect, and evolving.

Chris Carter shows us that the future doesn’t require blind optimism or fear.

It requires:

- Awareness

- Responsible leadership

- Ethical boundaries

- Better regulation

- Human-centered innovation

Most importantly, it requires people willing to ask difficult questions — just like the ones raised in this conversation.

Thought-Provoking Q&A Session w/ Chris Carter

"The most important thing to remember is that it's a tool. It's not a replacement for human thought or human creativity. It's a tool to help us be more efficient, to help us make better decisions, and to help us solve complex problems. But at the end of the day, it's the human behind the tool that matters. We have to have the right intentions and the right ethics in place."

"I truly feel that people have good intentions to better themselves, to better other human beings, and to better the world altogether. We have to be careful, but we also have to be optimistic. We need to use data and emerging technologies to boost efficiency and unlock better business outcomes that actually help the planet. It’s about integrating these things in a good way."

"I think it's going to change how we work, but it's not going to replace us. It's going to allow us to focus on the things that humans are actually good at, like empathy, creativity, and complex problem-solving. AI can handle the data and the automation, but it can't handle the 'human' side of sustainability. We need to embrace the change and learn how to work alongside these systems."

"No matter what your color, your creed, your religion, your sexual—it doesn't matter. We all are one planet. It's the only planet we know that sustains life. And we are one life altogether. So I really hope that everybody takes it and understands the betterment that we can all do to make this one planet so much better."

"You have to start with the data. If you don't have good data, you're not going to get good results. But more importantly, you have to start with a clear understanding of your values. What are you trying to achieve? If your goal is just to make money, then AI might lead you down a dangerous path. But if your goal is to create a more sustainable and equitable world, then AI can be a powerful ally."

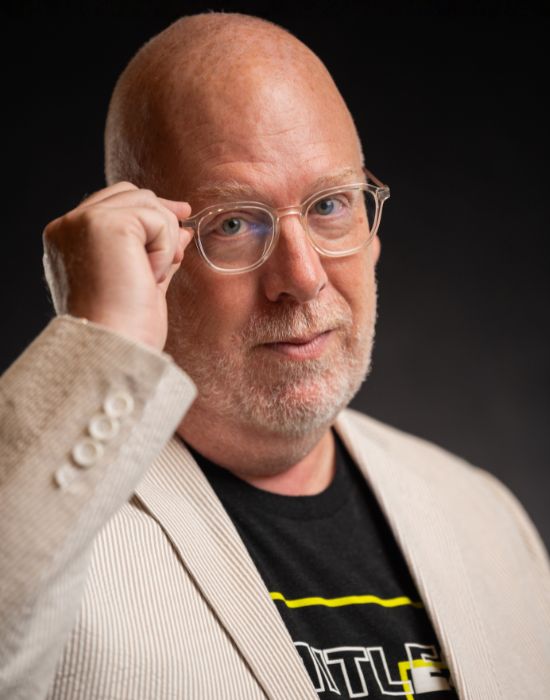

Chris Carter

Chris Carter’s long career in tech and leadership in the industry’s evolution

Chris Carter is the Chairman and CEO of Approyo, a global technology services company operating at the forefront of large-scale enterprise systems. He is widely recognized for leveraging data, AI, and emerging technologies to modernize enterprise systems, boost efficiency, and improve decision-making and business outcomes. He is also a co-founder of Impala Ventures, an investment firm supporting startups and entrepreneurs in the IT sector.

A graduate of the Georgia Institute of Technology, Christopher has received numerous awards and international recognition throughout his career. His most recent honor is being named 2026 Top Tech CEO of the Year, highlighting his impact and leadership in the technology industry.